DEAL ZONE

LATEST POSTS

The Canon RF 14mm F1.4L VCM is Right Around the Corner

I have been talking about two more VCM lenses being on the horizon for a while now, with one of them being wider than the RF 20mm f/1.4L VCM and another longer than the RF 85mm f/1.4L VCM. It looks…

Canon EOS R6 Mark II $1699, R5 $2339, EOS R50 V Kit $639 and More

Canon USA has restocked some of their refurbished RF gear inventory. There are some great deals to be had if you're in the market for some camera wares. The EOS R5 at $2339 is a great price for a camera…

Is The First Canon VCM L Zooming Coming in March?

The first half of 2026 announcements from Canon appear to be reaching more people before the official announcements. The last of the major camera tradeshows, CP+ begins February 26, 2026, and runs through March 1, 2026. I do expect an…

More Canon RF 35 f/1.2L and RF 24 f/1.2L Optical Designs

In this patent application (2026-013481), Canon has several interesting embodiments that we will explore here. These embodiments look very much like something I'd expect to see in a production halo RF 35mm f/1.2 prime. Lots of elements and dual focus…

CANON EOS R7 MARK II ROUNDUP

MORE NEWS

The Canon RF 24-105mm f/4L IS Just Got Better With New Firmware

This is a confusing mess of a firmware update from Canon for the RF24-105mm F4 L IS USM. Times like this make me wonder what is really happening over there. Nonetheless, this is a worthy update to the Canon RF…

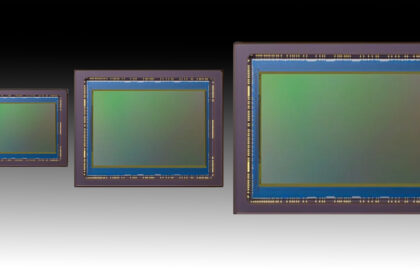

The R7 Mark II with 39MP: It Sounds Spot on – and here’s why it makes sense

Okay, so the rumors are sounding as if it appears that the Canon EOS R7 Mark II is indeed set to arrive in the first half of 2026 with a new 39MP APS-C sensor of BSI variety, either stacked or…

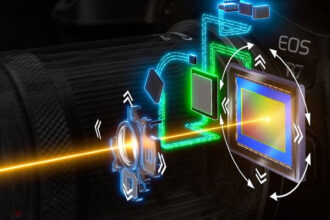

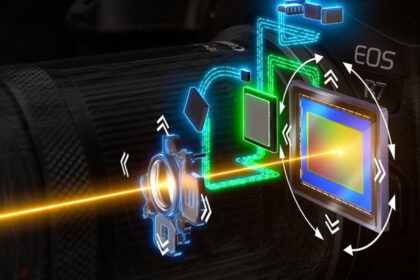

Canon's Image Stablization Innovation

This article looks through Canon's history and innovation in the realm of image stabilization. So sit down, grab a coffee, because this is a long article. Canon’s image stabilization (IS) systems have transformed photography and videography, delivering sharp images and…

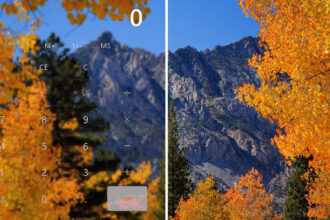

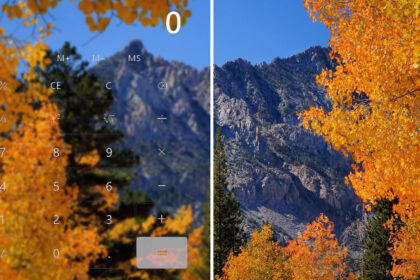

Advanced Sensor Diffraction Limit Calculator

A long-running commentary, I think some of you have seen me talk about, is that high MP cameras are not as diffraction-limited as you may first think. That's because a lot of you have been using a very simplistic calculator…

MORE NEWS

The 10 Most Important Canon PowerShot Cameras Ever Made

Following my list of the 10 most important EOS Digital cameras ever, I figured I'd do the same thing for PowerShot. Don't worry, there is no top 10 printer or camcorder list coming. As we all know, the compact market…

The Annual 2026 BCN Awards – Kodak Dominates

The annual BCN awards are out. These awards are based on Japanese retail sales data. There are winners and losers, and perhaps the most interesting is that Kodak is the #1 compact camera retailer in the Japanese Market, so while…

Canon Cinema EOS C50 First Impressions

I have Canon's latest Cinema camera in the office, and I'm putting it through its paces. Is this the next great filmmaker's camera?

The 10 Most Important Canon EOS Digital Cameras of All-Time

Over the last little while I have been going down some historical digital camera development rabbit holes. I have been around for most of the evolution of digital ILC cameras when I started out with the 300D in 2003. This…